This post is part of a series on NServiceBus. In the previous articles, we looked at fundamental concepts for messaging and how to get started with NServiceBus. In this article, we’ll take a step back from low-level implementation details, put on our architectural glasses 🤓 and review the Web-Queue-Worker architecture. As with any architecture, we’ll examine its tradeoffs and benefits, as well as how NServiceBus makes implementing this pattern easier. We will explore its key components and how they interact to provide a robust solution for processing both web requests and background tasks.

Web-Queue-Worker Architecture Overview 🏡

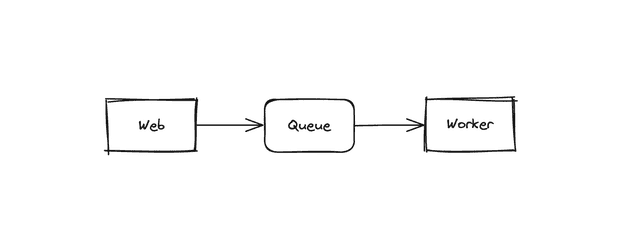

This architectural pattern typically consists of the following components:

- Web Application: The front-end application that handles user interactions and produces messages put onto a queue.

- Message Queue: A messaging system (RabbitMQ, Azure Service Bus) that acts as a buffer between the web application and the worker services. It ensures that messages are reliably delivered and processed.

- Worker Services: Background services that consume messages from the queue and perform the necessary processing. These services can be scaled independently based on the workload.

This architecture makes it easy to separate the concerns of handling web requests and processing background tasks. With this separation, the architecture allows for better scalability, maintainability, and resilience in distributed systems. Let’s explore why this works so well and some of my own personal opinions on what makes this architecture a great fit for modern applications.
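To make the three roles concrete, here is a minimal in-process sketch. It assumes `System.Threading.Channels` as a stand-in for the queue, which is not durable and not what you would deploy; a real system would use a broker such as RabbitMQ or Azure Service Bus. The `SendConfirmationEmail` type and the `WebQueueWorkerSketch` class are hypothetical names invented for this illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Channels;
using System.Threading.Tasks;

// Hypothetical message type for this sketch.
public record SendConfirmationEmail(int OrderId);

public static class WebQueueWorkerSketch
{
    // "Web" role: accept the request, enqueue a message, and return right away.
    public static async Task AcceptOrderAsync(ChannelWriter<SendConfirmationEmail> queue, int orderId)
    {
        await queue.WriteAsync(new SendConfirmationEmail(orderId));
    }

    // "Worker" role: drain the queue and do the slow work in the background.
    public static async Task<List<int>> RunWorkerAsync(ChannelReader<SendConfirmationEmail> queue)
    {
        var processed = new List<int>();
        await foreach (var message in queue.ReadAllAsync())
        {
            await Task.Delay(10); // stand-in for slow work such as sending an email
            processed.Add(message.OrderId);
        }
        return processed;
    }

    // Wires the roles together: the channel plays the part of the message queue.
    public static async Task<List<int>> RunDemoAsync()
    {
        var channel = Channel.CreateUnbounded<SendConfirmationEmail>();
        var worker = RunWorkerAsync(channel.Reader);

        for (var orderId = 1; orderId <= 3; orderId++)
        {
            await AcceptOrderAsync(channel.Writer, orderId);
        }

        channel.Writer.Complete(); // no more messages; lets the worker finish
        return await worker;
    }
}
```

Calling `await WebQueueWorkerSketch.RunDemoAsync()` enqueues three orders and returns the IDs the worker processed. The point of the sketch is that the producer never waits on the slow work, only on the enqueue.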

Evaluating the tradeoffs of this approach ⚖️

The Web-Queue-Worker pattern improves responsiveness by offloading slow tasks to background processors, letting web requests complete quickly whenever possible. It scales elastically by growing worker capacity independently and using queues to absorb short bursts and peak loads. A durable queue combined with retries, delayed delivery, and dead-letter queues can increase the resilience of a system. Background operations can be paused, replayed, or throttled without taking a site down, which may be a critical requirement for user-facing systems in today’s business landscape. One of my favorite aspects of this architecture is that you can start small with one worker and, as your application grows or SLAs change, add workers and route messages between them to satisfy technical or business needs without significant rework.
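The retry and dead-letter behavior described above can be sketched in a few lines. This is a simplified, in-memory illustration under stated assumptions, not how any particular broker or framework implements it: `RetryingConsumer`, its constructor parameters, and the `DeadLetters` list are all hypothetical names, and a real dead-letter queue would be durable.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

public class RetryingConsumer<TMessage>
{
    private readonly Func<TMessage, Task> _handler;
    private readonly int _maxAttempts;
    private readonly TimeSpan _retryDelay;

    public RetryingConsumer(Func<TMessage, Task> handler, int maxAttempts, TimeSpan retryDelay)
    {
        if (maxAttempts < 1) throw new ArgumentOutOfRangeException(nameof(maxAttempts));
        _handler = handler;
        _maxAttempts = maxAttempts;
        _retryDelay = retryDelay;
    }

    // Messages that exhausted their attempts are parked here for inspection or replay.
    public List<TMessage> DeadLetters { get; } = new();

    public async Task ConsumeAsync(TMessage message)
    {
        for (var attempt = 1; attempt <= _maxAttempts; attempt++)
        {
            try
            {
                await _handler(message);
                return; // handled successfully
            }
            catch (Exception)
            {
                if (attempt == _maxAttempts)
                {
                    DeadLetters.Add(message); // give up and park the message
                    return;
                }

                await Task.Delay(_retryDelay); // wait before the next attempt
            }
        }
    }
}
```

A handler that fails twice and then succeeds leaves `DeadLetters` empty, while one that always fails parks the message after the last attempt instead of losing it or blocking the messages behind it.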

As always, these gains come with some costs. The switch to multiple deployable services increases complexity: it introduces latency and breaks previously atomic (ACID) operations in favor of eventual consistency, so users may need UX improvements like progress indicators and toast notifications. Idempotency, deduplication, and ordering all become important design considerations for message handlers. Operational overhead ($$$) increases as you provision and monitor queues and worker hosts. Troubleshooting inevitable bugs 🐛 gets harder as messaging pipelines evolve, creating the need for correlation and distributed tracing (use OpenTelemetry!), and evolving message contracts requires careful versioning.
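Since most queues deliver messages at least once, a handler may see the same message twice. Here is a minimal sketch of deduplication by message ID, assuming an in-memory set of processed IDs. The `IdempotentHandler` wrapper is a hypothetical name for this illustration; a production version would need a durable store updated in the same transaction as the business data.

```csharp
using System;
using System.Collections.Concurrent;
using System.Threading.Tasks;

public class IdempotentHandler<TMessage>
{
    // In-memory record of handled message IDs (an assumption for this sketch;
    // it is lost on restart, so real systems persist it).
    private readonly ConcurrentDictionary<string, bool> _processedIds = new();
    private readonly Func<TMessage, Task> _inner;

    public IdempotentHandler(Func<TMessage, Task> inner) => _inner = inner;

    // Returns true if the message was handled, false if it was skipped as a duplicate.
    public async Task<bool> HandleAsync(string messageId, TMessage message)
    {
        // TryAdd is atomic, so only the first delivery of a given ID gets through.
        if (!_processedIds.TryAdd(messageId, true))
        {
            return false;
        }

        try
        {
            await _inner(message);
            return true;
        }
        catch
        {
            // Forget the ID so a retry of the failed message is not treated as a duplicate.
            _processedIds.TryRemove(messageId, out _);
            throw;
        }
    }
}
```

Handling the same `messageId` twice runs the inner handler once and returns `false` the second time.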

Luckily, if you choose NServiceBus for the messaging pipeline of this architecture, you get these benefits while mitigating many of the downsides. The Outbox and Unit of Work patterns, deduplication, retries and recoverability, direct integration with OpenTelemetry for observability, and the Particular Service Platform for common operational concerns are all built in. We’ve covered most of these topics in previous articles in this series if you would like to brush up on how NServiceBus facilitates them.
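As a rough idea of what opting into some of these features looks like, here is a configuration fragment for a worker endpoint. Treat it as a sketch: the endpoint name `Orders.Worker`, the error queue name, and the retry counts and delay are illustrative assumptions, and the transport, persistence, and serializer setup are left out because they depend on your broker, storage, and NServiceBus version.

```csharp
using System;
using NServiceBus;

public static class WorkerEndpointConfig
{
    public static EndpointConfiguration Create()
    {
        // "Orders.Worker" is a hypothetical endpoint name.
        var endpointConfiguration = new EndpointConfiguration("Orders.Worker");

        // Transport, persistence, and serializer configuration intentionally omitted.

        var recoverability = endpointConfiguration.Recoverability();

        // Retry right away a few times for transient failures (illustrative value).
        recoverability.Immediate(immediate => immediate.NumberOfRetries(3));

        // Then retry with an increasing delay before giving up (illustrative values).
        recoverability.Delayed(delayed =>
        {
            delayed.NumberOfRetries(2);
            delayed.TimeIncrease(TimeSpan.FromSeconds(10));
        });

        // Messages that still fail are moved to this queue instead of being lost.
        endpointConfiguration.SendFailedMessagesTo("error");

        // Requires a persistence that supports the Outbox.
        endpointConfiguration.EnableOutbox();

        return endpointConfiguration;
    }
}
```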

Reflecting on the Web-Queue-Worker Architecture 🪞

I consider this approach of using a message queue for communication between two services to be a building block, or at least a foundational component, of distributed systems, as it lays the groundwork for more advanced patterns like event sourcing and CQRS. In the same way a well-structured monolith is a great building block for most web applications, the Web-Queue-Worker architecture pattern is a solid starting point for building event-driven architectures, microservices, and distributed systems. This architecture implemented alongside NServiceBus provides a great starting point for teams that are just getting started with messaging-based systems and gives them the introduction they need to grow these systems over time. I’ve also seen this architecture implemented as a cohesive unit of services, like an architectural bounded context, in a larger set of microservices applications spanning an enterprise-wide system. In that case, this pattern worked well for focusing on its own domain and growing to provide adapters to and integrations with other systems.

If you’ve been following along with NimblePros’ series on NServiceBus, this whole concept may sound familiar as we mentioned a similar sample in a previous article, Getting Started with NServiceBus. In that article, we explored the basics of NServiceBus and how it can be used to implement messaging patterns in .NET applications. We started with an identical architecture (you’ll notice the architecture diagrams look very similar 😁), sending a command from a web API to a queue which was then processed by a worker service.

Real world applications 🌎

In an ideal world, we should aim to build all the functionality into a single monolithic application. Modular monoliths are a great starting point or target for refactoring existing applications. Rather than jumping directly into event-driven architecture and microservices, most projects can benefit from starting simple with a monolith and gradually evolving towards a more distributed architecture as needed. While the aim of this post isn’t to sell you on the monolithic approach, it’s important to mention where we’re coming from, as it’s an architecture most developers work with often. Of course this comes with some nuance, but anecdotally I’ve rarely seen a software project “fail” because someone chose a well-structured monolith with clear boundaries as their initial architecture, even if those boundaries aren’t perfect. This approach allows teams to focus on delivering value quickly while laying the groundwork for future scalability.

Every choice is a tradeoff, and it’s important to understand the needs and requirements of what you’re building. If you can justify that you’re going to need the scalability and flexibility of a distributed architecture in the future, it may be worth considering the Web-Queue-Worker pattern earlier in the design process. But we’ll start with something simple for now in our example (and you probably should too).

Exploring a sample use case 🗺️

Let’s consider an application that will have users who can browse products, add them to their cart, and place orders, similar to eShopOnWeb. Initially, all of this functionality will be implemented within a single monolithic application. The application will handle user authentication, product catalog management, shopping cart functionality, and order processing all within the same codebase.

As the application grows, we may find that certain areas become bottlenecks or require more scalability. For example, the order processing functionality may need to handle a large volume of requests during peak shopping times. Perhaps we’ve found that the notification emails that get sent out after an order is placed are a performance bottleneck or result in failures. This can lead to orders being placed with no notification emails if the data is persisted but the email fails to send (aka zombie records). At the code level, email sending might have lived directly in MediatR handlers next to domain code. It might also have lived in domain events, but we’ll keep things simple for now. In many CRUD applications, that code might have looked something like this:

    using MediatR;

    public class CreateOrderHandler : IRequestHandler<CreateOrderCommand, Order>
    {
        private readonly IEmailSender _emailSender;
        private readonly IRepository<Order> _repository;

        public CreateOrderHandler(IEmailSender emailSender, IRepository<Order> repository)
        {
            _emailSender = emailSender;
            _repository = repository;
        }

        public async Task<Order> Handle(CreateOrderCommand request, CancellationToken cancellationToken)
        {
            var order = new Order
            {
                UserId = request.UserId,
                Items = request.Items,
                Total = request.Total
            };

            // Persist the order first...
            await _repository.CreateAsync(order);

            // ...then send the email inside the same web request.
            await _emailSender.SendOrderConfirmationAsync(order); // 💥 slow operation!

            return order;
        }
    }

How could we alleviate this issue to help offset the load on the monolith?

Evolving the architecture to utilize messaging 📬

As we seek to improve our application, we’ll add a message queue and worker service to this sample use case to offload the email sending process from the web application to a background service. With these three components in place, we’ve now implemented the foundation for the Web-Queue-Worker architecture.

Updating the previous sample with messaging ➡️

In our e-commerce sample, we can use a message queue to handle the sending of order confirmation notifications. Instead of sending emails directly within the CreateOrderHandler, we can publish an event to the message queue after the order is created.

    using MediatR;

    public class CreateOrderHandler : IRequestHandler<CreateOrderCommand, Order>
    {
        private readonly IMessageSender _messageSender;
        private readonly IRepository<Order> _repository;

        public CreateOrderHandler(IMessageSender messageSender, IRepository<Order> repository)
        {
            _messageSender = messageSender;
            _repository = repository;
        }

        public async Task<Order> Handle(CreateOrderCommand request, CancellationToken cancellationToken)
        {
            var order = new Order
            {
                UserId = request.UserId,
                Items = request.Items,
                Total = request.Total
            };

            await _repository.CreateAsync(order);

            // Publish an event to message queue rather than sending an email ✅
            await _messageSender.Publish(new OrderCreatedEvent(order.Id));

            return order;
        }
    }

In this example, the OrderCreatedEvent is published to the message queue after the order is created. A separate worker service subscribes to this event and handles the email sending process asynchronously. That consuming code may look something like this if you’re using NServiceBus:

    using NServiceBus;

    public class OrderCreatedEventHandler : IHandleMessages<OrderCreatedEvent>
    {
        private readonly IEmailSender _emailSender;
        private readonly IRepository<Order> _repository;

        public OrderCreatedEventHandler(IEmailSender emailSender, IRepository<Order> repository)
        {
            _emailSender = emailSender;
            _repository = repository;
        }

        public async Task Handle(OrderCreatedEvent message, IMessageHandlerContext context)
        {
            // The event only carries the ID, so load the order before sending the email.
            var order = await _repository.GetByIdAsync(message.OrderId);

            await _emailSender.SendOrderConfirmationAsync(order);
        }
    }

There you have it! We now have a clear separation of concerns between our web application and the background processing of order confirmation notifications.
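The samples above use `OrderCreatedEvent` and `IMessageSender` without showing them. Here is one way they might look, kept dependency-free so it can double as a test helper. Everything in it is an assumption: the `int` order ID, the shape of `IMessageSender` (it is not an NServiceBus type), and the `RecordingMessageSender` test double. A production implementation would presumably delegate to NServiceBus’s `IMessageSession.Publish`.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

// Event contract shared by the web app and the worker. With NServiceBus it would
// also need to be recognized as an event, for example via the IEvent marker
// interface or message conventions (omitted to keep this sketch dependency-free).
public class OrderCreatedEvent
{
    public OrderCreatedEvent(int orderId) => OrderId = orderId;

    public int OrderId { get; } // int is an assumption; use your real key type
}

// Hypothetical abstraction matching how CreateOrderHandler calls it.
public interface IMessageSender
{
    Task Publish(object message);
}

// Test double that records published messages instead of talking to a broker.
public class RecordingMessageSender : IMessageSender
{
    public List<object> Published { get; } = new();

    public Task Publish(object message)
    {
        Published.Add(message);
        return Task.CompletedTask;
    }
}
```

Keeping the web application behind a small interface like this means `CreateOrderHandler` can be unit tested by asserting on `Published`, without a running broker.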

A note about distributed monoliths 🤯

Notice I haven’t mentioned any advanced techniques like separate databases per service, orchestrated sagas, or event sourcing. Be careful when designing your applications in this way so that you don’t build a distributed big ball of mud, which is a significantly worse situation to be in than a single monolithic mess. These sample applications are cohesive enough that you can imagine the web and worker applications’ source code might live together in the same repository or monorepo, while a microservices architecture might typically involve more separation. The complexity of managing things like deployments and versioning in distributed systems can quickly outweigh the benefits, so it’s essential to keep things as simple as possible where it makes sense, but also be diligent to not end up in a bad situation architecturally.

A note about self-hosted endpoints in web applications 🗒️

It’s worth noting that some frameworks, like NServiceBus, allow you to host message endpoints within your web application. This can simplify the architecture by reducing the number of services you need to manage, but it also introduces some trade-offs in terms of scalability and separation of concerns. Since self-processing of messages in this way doesn’t offload any of the performance issues to another application, I generally don’t take this approach unless there is a compelling reason to do so.

Conclusion 🧠

Hopefully this article has helped build your knowledge of the Web-Queue-Worker architecture style. By understanding its principles and best practices, you can make informed decisions when designing your applications for scalability and maintainability. As developers and architects, it’s important to be aware of the options available to you. This is especially important as your application grows and evolves over time. In my experience, this architecture style strikes a good balance between complexity and flexibility, making it a solid choice for many applications that need to grow beyond a monolithic design. If your team would like to explore this architecture and NServiceBus further, please reach out to NimblePros as we offer consulting services to help you implement these patterns effectively. 🎉